Machine Learning with PyTorch and Scikit-Learn: A Comprehensive Guide

This comprehensive guide, available as a print or Kindle edition, expertly blends PyTorch’s simplicity with Scikit-Learn’s robust tools, offering a deep dive into machine learning.

The documentation and tutorials for both libraries, often available as PDFs, cover the deep learning techniques discussed here in more depth.

Machine Learning with PyTorch and Scikit-Learn, published by Packt Publishing, is a welcome addition for data science enthusiasts. This book, part of the bestselling Python Machine Learning series, is authored by a distinguished trio: Sebastian Raschka, Yuxi (Hayden) Liu, and Vahid Mirjalili.

Sebastian Raschka is a prolific contributor to machine learning software and a renowned author of numerous deep learning and data visualization tutorials. His expertise shines throughout the book, providing practical insights. Yuxi Liu and Vahid Mirjalili bring their own unique perspectives and skills, creating a well-rounded and comprehensive learning experience.

The book guides readers through combining the strengths of PyTorch and Scikit-Learn. Whether you are seeking a PDF version for offline study or prefer the convenience of a Kindle edition, it aims to equip you with practical deep learning skills.

Overview of Machine Learning with PyTorch and Scikit-Learn

This guide comprehensively explores the synergy between PyTorch and Scikit-Learn, two powerful Python libraries for machine learning. It delves into building neural networks, from simple architectures to more complex convolutional networks, leveraging PyTorch’s tensor computing capabilities. Simultaneously, it showcases Scikit-Learn’s role in streamlining machine learning pipelines, including data preprocessing and model evaluation.

The book covers key algorithms within Scikit-Learn, such as LinearSVC, and demonstrates how to integrate deep learning models built with PyTorch into existing Scikit-Learn workflows. Readers will gain a thorough understanding of each library’s strengths and weaknesses, enabling informed decisions about which tool is best suited for specific tasks.

Whether accessed as a PDF or through other formats, this resource provides a practical, hands-on approach to mastering modern machine learning techniques, bridging the gap between traditional and deep learning methodologies.

Why Choose PyTorch and Scikit-Learn?

Selecting PyTorch and Scikit-Learn offers a compelling combination for machine learning practitioners. PyTorch, which competes with TensorFlow, has rapidly gained popularity thanks to its dynamic computation graph and Pythonic style, both of which make it well suited to research and rapid prototyping. This guide highlights that simplicity throughout.

Scikit-Learn excels in traditional machine learning tasks, providing efficient tools for data preprocessing, model selection, and evaluation. Its well-defined API and extensive documentation make it accessible to beginners, while its performance is suitable for many real-world applications.

Combining these libraries allows leveraging the strengths of both: PyTorch for complex deep learning models and Scikit-Learn for streamlined workflows and robust evaluation. This synergy empowers data scientists to tackle a wide range of machine learning challenges effectively.

PyTorch Fundamentals

PyTorch, a Python-based tensor computing library, forms the foundation for deep learning. Explore tensors, autograd, and neural network building blocks – detailed in available PDF guides.

Understanding Tensors in PyTorch

Tensors are the fundamental building blocks of PyTorch, akin to NumPy arrays but with the added benefit of GPU acceleration. They serve as the core data structure for representing and manipulating numerical data within neural networks. Understanding tensors is crucial for effectively utilizing PyTorch for machine learning tasks.

These multi-dimensional arrays enable efficient computation, especially when dealing with large datasets. PyTorch provides a rich set of functions for creating, manipulating, and performing operations on tensors. Resources, including PDF documentation, detail warm-up exercises using NumPy to transition seamlessly into PyTorch tensor operations.

You can define tensor shapes, data types, and even specify whether the tensor should reside on the CPU or GPU. Mastering tensor manipulation is key to building and training complex deep learning models. The official PyTorch documentation and various online tutorials offer comprehensive guidance on this essential topic, often available in convenient PDF format for offline access.
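The points above about shapes, data types, and device placement can be illustrated with a minimal sketch. The values and variable names are illustrative assumptions, and the code falls back to the CPU when no GPU is present.

```python
import numpy as np
import torch

# Create a 2x2 tensor from a nested Python list with an explicit dtype.
a = torch.tensor([[1.0, 2.0], [3.0, 4.0]], dtype=torch.float32)

# Convert a NumPy array to a tensor; from_numpy shares memory with the array.
b = torch.from_numpy(np.ones((2, 2), dtype=np.float32))

# Use the GPU when one is available, otherwise stay on the CPU.
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
a, b = a.to(device), b.to(device)

# Matrix product followed by a broadcast scalar addition.
c = a @ b + 1.0
print(c.shape, c.dtype, c.device)
```

Both operands must live on the same device before they are combined, which is why the two tensors are moved together.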

Autograd: Automatic Differentiation

Autograd is PyTorch’s automatic differentiation engine, a cornerstone of deep learning model training. It efficiently computes gradients of complex functions, essential for optimizing neural network parameters via backpropagation. This eliminates the need for manual gradient calculations, significantly simplifying the development process.

PyTorch records the operations performed on tensors created with requires_grad=True, building a computational graph dynamically as the forward pass runs. When backward() is called on a result, Autograd traverses this graph in reverse and accumulates the gradients in the .grad attribute of those tensors. Detailed explanations, often available in PDF format, clarify how Autograd integrates with tensor operations.

Understanding Autograd is vital for customizing training loops and implementing advanced optimization algorithms. Numerous online tutorials and the official PyTorch documentation provide practical examples and in-depth explanations. Mastering this feature unlocks the full potential of PyTorch for building and training sophisticated machine learning models, streamlining the entire process.
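As a small worked example of the mechanism described above, the sketch below differentiates y = sum(x^2), whose gradient is 2x. The input values are arbitrary and chosen only for illustration.

```python
import torch

# A leaf tensor whose operations Autograd should record.
x = torch.tensor([1.0, 2.0, 3.0], requires_grad=True)

# Forward pass: the graph for y = sum(x ** 2) is built as this line runs.
y = (x ** 2).sum()

# Backward pass: traverse the graph and store dy/dx in x.grad.
y.backward()

print(x.grad)  # analytically, dy/dx = 2 * x
```

Gradients accumulate across calls to backward(), which is why training loops usually reset them before each step.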

Building Simple Neural Networks with PyTorch

PyTorch simplifies neural network construction through its modular design. A custom architecture is defined by subclassing nn.Module and composing layers inside it. Common layers, such as linear and convolutional layers, are readily available in the torch.nn package.

Creating a basic network involves defining the forward pass, specifying how input data flows through the layers. Optimization is then achieved using algorithms like Stochastic Gradient Descent (SGD), readily implemented with PyTorch’s optim package. Detailed tutorials, often available as PDF guides, demonstrate these concepts with practical examples.

PyTorch facilitates rapid prototyping and experimentation, making it ideal for both beginners and experienced practitioners in machine learning. The framework’s flexibility allows for building networks ranging from simple multi-layer perceptrons to complex deep learning architectures, all while leveraging Autograd for efficient gradient computation.
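A minimal sketch of these steps follows: a small multi-layer perceptron defined with nn.Module, a forward pass, and an SGD training loop. The class name TinyMLP, the synthetic data, and the hyperparameters are all assumptions made for illustration, not values taken from the book.

```python
import torch
from torch import nn

torch.manual_seed(0)  # make the illustrative run repeatable


class TinyMLP(nn.Module):
    """Hypothetical two-layer perceptron used only for this example."""

    def __init__(self, in_features=2, hidden=8, out_features=1):
        super().__init__()
        self.net = nn.Sequential(
            nn.Linear(in_features, hidden),
            nn.ReLU(),
            nn.Linear(hidden, out_features),
        )

    def forward(self, x):
        # The forward pass defines how inputs flow through the layers.
        return self.net(x)


# Synthetic regression data: y = x0 + 2 * x1 (made up for the sketch).
X = torch.randn(64, 2)
y = X[:, :1] + 2 * X[:, 1:]

model = TinyMLP()
loss_fn = nn.MSELoss()
optimizer = torch.optim.SGD(model.parameters(), lr=0.05)

losses = []
for epoch in range(200):
    optimizer.zero_grad()        # clear gradients from the previous step
    loss = loss_fn(model(X), y)  # forward pass and loss
    loss.backward()              # Autograd computes the gradients
    optimizer.step()             # SGD parameter update
    losses.append(loss.item())

print(f"first loss {losses[0]:.4f}, last loss {losses[-1]:.4f}")
```

The loop trains on the full batch at every step to keep the example short; real projects would typically iterate over mini-batches from a DataLoader.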

Scikit-Learn Essentials

Scikit-Learn plays a vital role in machine learning pipelines, offering tools for data preprocessing, model selection, and evaluation – often detailed in PDF guides.

Scikit-Learn’s Role in Machine Learning Pipelines

Scikit-Learn establishes itself as a cornerstone within comprehensive machine learning pipelines, expertly handling crucial stages beyond deep learning’s scope. Its strengths lie in streamlined data preprocessing – cleaning, feature scaling, and dimensionality reduction – preparing datasets for optimal model performance. The library provides a diverse collection of algorithms, including LinearSVC, facilitating efficient classification tasks.

Furthermore, Scikit-Learn excels in model selection and evaluation, offering tools like cross-validation and various metrics to assess performance rigorously. Many resources, including detailed PDF documentation, showcase its capabilities. It seamlessly integrates with other libraries, including PyTorch, allowing for hybrid workflows where deep learning models are deployed and evaluated using Scikit-Learn’s robust infrastructure. This synergy enables a holistic approach to machine learning, leveraging the strengths of both frameworks for enhanced results and streamlined development.

Key Algorithms in Scikit-Learn (LinearSVC, etc.)

Scikit-Learn boasts a rich repertoire of algorithms catering to diverse machine learning tasks. LinearSVC, a standout, provides efficient linear support vector classification, ideal for text categorization and similar problems. Beyond LinearSVC, the library includes algorithms for regression, clustering, dimensionality reduction, and model selection. These encompass Logistic Regression, Support Vector Machines with various kernels, Decision Trees, Random Forests, and K-Means clustering.

Detailed PDF documentation accompanies each algorithm, explaining parameters, usage, and underlying principles. Scikit-Learn’s consistent API simplifies experimentation and comparison. The library’s focus on practicality and ease of use makes it accessible to both beginners and experienced practitioners. Its algorithms are often used in conjunction with deep learning models built in PyTorch, creating powerful hybrid solutions. Understanding these core algorithms is fundamental to building effective machine learning pipelines.
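To make the consistent fit/predict/score API concrete, here is a short sketch that trains LinearSVC on a synthetic dataset. The dataset sizes and parameter values are assumptions chosen for illustration.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.svm import LinearSVC

# Synthetic binary classification data (sizes chosen arbitrarily).
X, y = make_classification(
    n_samples=300, n_features=10, n_informative=5, random_state=0
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0
)

# Every Scikit-Learn estimator follows the same fit / predict / score pattern.
clf = LinearSVC(C=1.0, max_iter=10000, random_state=0)
clf.fit(X_train, y_train)

accuracy = clf.score(X_test, y_test)
print(f"test accuracy: {accuracy:.3f}")
```

Swapping in another classifier, such as LogisticRegression or RandomForestClassifier, would only change the import and the constructor line.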

Data Preprocessing with Scikit-Learn

Scikit-Learn excels in data preprocessing, a crucial step before applying machine learning algorithms. It offers tools for handling missing values (for example, SimpleImputer), feature scaling (StandardScaler, MinMaxScaler), and categorical data encoding (OneHotEncoder for input features, LabelEncoder for target labels). These transformations ensure data quality and improve model performance. Pipelines allow chaining preprocessing steps with model training for streamlined workflows.

Comprehensive PDF documentation details each preprocessing technique, including parameters and best practices. Scikit-Learn simplifies tasks like data normalization and standardization, vital for algorithms sensitive to feature scales. This preprocessing is often applied before feeding data into PyTorch models, ensuring optimal training. Proper data preparation significantly impacts model accuracy and generalization ability. Mastering these techniques is essential for any machine learning practitioner, and the library’s resources provide a solid foundation.
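The preprocessing steps named above can be sketched as follows. The tiny arrays are made-up data, and keeping numeric and categorical columns in separate arrays is a simplification chosen for this example.

```python
import numpy as np
from sklearn.impute import SimpleImputer
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import OneHotEncoder, StandardScaler

# Made-up numeric features with one missing value.
X_num = np.array([[1.0, 200.0], [2.0, np.nan], [3.0, 600.0], [4.0, 400.0]])

# Chain imputation and standardization so they are always applied together.
numeric_steps = Pipeline([
    ("impute", SimpleImputer(strategy="mean")),
    ("scale", StandardScaler()),
])
X_num_scaled = numeric_steps.fit_transform(X_num)

# Made-up categorical feature, one-hot encoded into indicator columns.
X_cat = np.array([["red"], ["green"], ["red"], ["blue"]])
encoder = OneHotEncoder(handle_unknown="ignore")
X_cat_encoded = encoder.fit_transform(X_cat).toarray()

# Combined matrix, ready to be converted to a tensor for a PyTorch model.
X_ready = np.hstack([X_num_scaled, X_cat_encoded])
print(X_ready.shape)
```

In a real workflow the transformers should be fitted on the training split only and then applied to validation and test data, so that no information leaks from held-out samples.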

Combining PyTorch and Scikit-Learn

PDF resources demonstrate seamless integration, utilizing Scikit-Learn for data preparation and evaluation while leveraging PyTorch’s deep learning capabilities for advanced modeling tasks.

Integrating Deep Learning Models (PyTorch) into Scikit-Learn Workflows

Successfully bridging the gap between traditional machine learning and deep learning is a core strength highlighted in available PDF guides. These resources detail how to encapsulate PyTorch models as estimators within Scikit-Learn pipelines. This allows leveraging Scikit-Learn’s cross-validation, grid search, and other utilities for robust model evaluation and hyperparameter tuning.

The process often involves creating a wrapper class that conforms to the Scikit-Learn estimator interface. This wrapper handles the conversion of data between Scikit-Learn’s expected format (NumPy arrays) and PyTorch’s tensor format. PDF documentation emphasizes the importance of careful data type handling during this conversion to avoid unexpected errors. Furthermore, it showcases how to utilize Scikit-Learn’s preprocessing tools to prepare data before feeding it into the PyTorch model, streamlining the entire workflow.

This integration enables a cohesive machine learning pipeline, combining the strengths of both libraries for optimal performance and maintainability, as detailed in comprehensive PDF tutorials.
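One way such a wrapper might look is sketched below. TorchMLPClassifier is a hypothetical class written for this example, not an API from either library or from the book, and the network size and training settings are arbitrary assumptions. It converts NumPy arrays to tensors inside fit and predict, so the model can sit in a Scikit-Learn pipeline and be cross-validated.

```python
import numpy as np
import torch
from torch import nn
from sklearn.base import BaseEstimator, ClassifierMixin
from sklearn.datasets import make_classification
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler


class TorchMLPClassifier(ClassifierMixin, BaseEstimator):
    """Hypothetical wrapper exposing a small PyTorch MLP via fit/predict."""

    def __init__(self, hidden=16, lr=0.1, epochs=100, seed=0):
        # Store hyperparameters unchanged so Scikit-Learn can clone the estimator.
        self.hidden = hidden
        self.lr = lr
        self.epochs = epochs
        self.seed = seed

    def fit(self, X, y):
        torch.manual_seed(self.seed)
        self.classes_, y_idx = np.unique(y, return_inverse=True)
        # NumPy float64 -> float32 tensor; class indices -> int64 tensor.
        X_t = torch.as_tensor(np.asarray(X), dtype=torch.float32)
        y_t = torch.as_tensor(y_idx, dtype=torch.long)
        self.model_ = nn.Sequential(
            nn.Linear(X_t.shape[1], self.hidden),
            nn.ReLU(),
            nn.Linear(self.hidden, len(self.classes_)),
        )
        optimizer = torch.optim.SGD(self.model_.parameters(), lr=self.lr)
        loss_fn = nn.CrossEntropyLoss()
        for _ in range(self.epochs):
            optimizer.zero_grad()
            loss = loss_fn(self.model_(X_t), y_t)
            loss.backward()
            optimizer.step()
        return self

    def predict(self, X):
        X_t = torch.as_tensor(np.asarray(X), dtype=torch.float32)
        with torch.no_grad():
            idx = self.model_(X_t).argmax(dim=1).numpy()
        # Map predicted indices back to the original class labels.
        return self.classes_[idx]


X, y = make_classification(n_samples=200, n_features=8, random_state=0)
pipeline = make_pipeline(StandardScaler(), TorchMLPClassifier(epochs=50))
scores = cross_val_score(pipeline, X, y, cv=3)
print(scores)
```

The explicit dtype arguments address the data type handling mentioned above: Scikit-Learn typically produces float64 arrays, while nn.Linear layers default to float32 weights and CrossEntropyLoss expects integer class indices.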

Utilizing Scikit-Learn for Data Preparation and Evaluation

Scikit-Learn excels in data preprocessing, a crucial step often detailed in PDF guides on machine learning with PyTorch and Scikit-Learn. Feature scaling techniques such as standardization and normalization, readily available in Scikit-Learn, can significantly improve model performance. These preprocessing steps are applied before data enters the PyTorch model, ensuring well-conditioned inputs.

PDF documentation highlights Scikit-Learn’s robust evaluation metrics – accuracy, precision, recall, F1-score, and more – for assessing model performance. These metrics provide a comprehensive understanding of the model’s strengths and weaknesses. Furthermore, Scikit-Learn’s cross-validation tools, thoroughly explained in tutorials, enable reliable performance estimation by splitting the data into multiple folds.

The combination allows for rigorous testing and validation, ensuring the PyTorch model generalizes well to unseen data. PDF resources emphasize that proper data preparation and evaluation are paramount for building reliable and effective machine learning systems.
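The evaluation step can be sketched as follows: predictions from a PyTorch model are converted to a NumPy array and passed to Scikit-Learn's metric functions. The logits and labels here are made-up values standing in for real model output.

```python
import numpy as np
import torch
from sklearn.metrics import accuracy_score, f1_score, precision_score, recall_score

# Hypothetical logits from a binary PyTorch classifier (values are made up).
logits = torch.tensor([
    [2.0, 0.5],
    [0.1, 1.2],
    [1.5, 0.3],
    [0.2, 0.9],
    [0.4, 1.1],
    [1.0, 0.8],
])
y_true = np.array([0, 1, 1, 1, 0, 0])

# Pick the highest-scoring class, then hand a NumPy array to Scikit-Learn.
y_pred = logits.argmax(dim=1).numpy()

accuracy = accuracy_score(y_true, y_pred)
precision = precision_score(y_true, y_pred)
recall = recall_score(y_true, y_pred)
f1 = f1_score(y_true, y_pred)
print(accuracy, precision, recall, f1)
```

If the model runs on a GPU, the predictions need to be moved back with .cpu() before calling .numpy().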

Comparison of PyTorch and Scikit-Learn Capabilities

PyTorch shines in deep learning, offering the flexibility needed for complex neural network architectures, as detailed in PDF resources on machine learning with PyTorch and Scikit-Learn. Its dynamic computation graph allows for intricate model designs and research-oriented experimentation. Conversely, Scikit-Learn excels in traditional machine learning algorithms – linear models, SVMs, decision trees – providing efficient implementations and streamlined workflows.

PDF guides demonstrate that Scikit-Learn prioritizes ease of use and rapid prototyping, while PyTorch demands a deeper understanding of tensor operations and automatic differentiation. PyTorch supports GPU acceleration out of the box, which is crucial for large datasets and models, whereas Scikit-Learn’s estimators run primarily on the CPU and most expect the training data to fit in memory, so its scalability is more limited.

Ultimately, the choice depends on the task. For deep learning, PyTorch is preferred; for traditional ML, Scikit-Learn is often more efficient. Many workflows, as shown in tutorials, integrate both libraries for a synergistic approach.

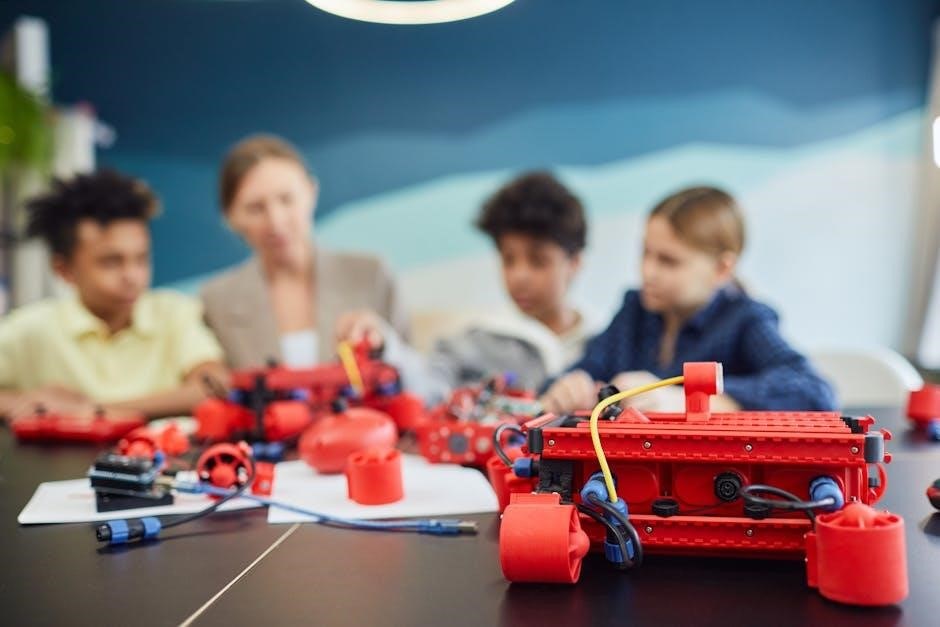

Resources and Tutorials

Explore official documentation for PyTorch and Scikit-Learn, alongside numerous online tutorials and courses. Downloadable PDF guides enhance your machine learning journey!

Official Documentation for PyTorch and Scikit-Learn

Delving into the official documentation is paramount for mastering PyTorch and Scikit-Learn. Both libraries boast exceptionally well-maintained and comprehensive resources, serving as the definitive source of truth for all functionalities. For PyTorch, the official website provides detailed API references, tutorials covering everything from tensor operations to building complex neural networks, and extensive guides on autograd and distributed training.

Scikit-Learn’s documentation is equally valuable, offering clear explanations of algorithms, data preprocessing techniques, model evaluation metrics, and practical examples. You can find PDF documentation for older versions, providing a historical perspective on the library’s evolution. These resources are crucial for understanding the underlying principles and best practices, enabling you to effectively apply these tools to your machine learning projects. Accessing these official sources ensures you’re utilizing the libraries correctly and staying up-to-date with the latest features and improvements.

Online Tutorials and Courses for Both Libraries

Numerous online tutorials and courses cater to learners of all levels, enhancing proficiency in PyTorch and Scikit-Learn. Platforms like Coursera, Udacity, and edX offer structured courses covering foundational concepts to advanced techniques, often including hands-on projects. YouTube hosts a wealth of free tutorials, ranging from beginner-friendly introductions to specialized topics like convolutional neural networks and support vector machines.

The official Python tutorial on Python.org is a solid starting point for the language itself. Many blogs and websites also offer detailed guides and code examples. Searching for “machine learning with PyTorch and Scikit-Learn PDF” can uncover supplementary materials and course notes. These resources complement the official documentation with alternative explanations and practical demonstrations, helping you learn faster and build working machine learning applications.

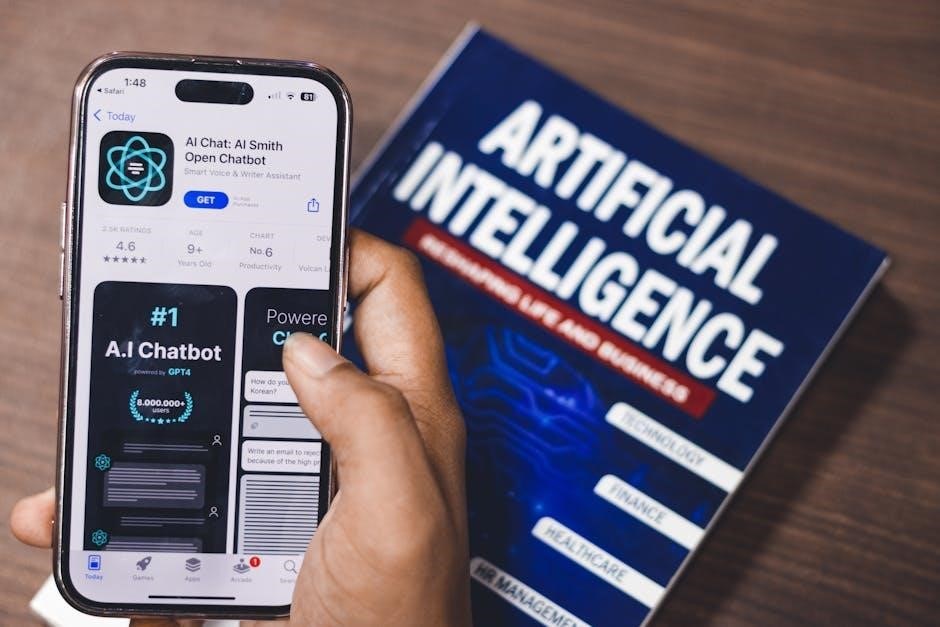

Accessing PDF Documentation and Guides

Comprehensive PDF documentation is readily available for both PyTorch and Scikit-Learn, serving as invaluable references for developers and researchers. The official Scikit-Learn website provides downloadable PDF versions of its user guide and API reference, detailing algorithms, data preprocessing techniques, and model evaluation metrics.

While PyTorch primarily offers online documentation, users can generate PDF versions of specific sections or utilize third-party tools to create complete offline copies. Searching online for “machine learning with PyTorch and Scikit-Learn PDF” yields access to supplementary guides, tutorials, and even complete e-books. These resources often consolidate information, offering a convenient offline learning experience. Older versions’ PDF documentation can also be found online, providing historical context and insights into the evolution of these powerful libraries.